Keyword: computer-vision

2025

Euclid Quick Data Release (Q1). Active galactic nuclei identification using diffusion-based inpainting of Euclid VIS images

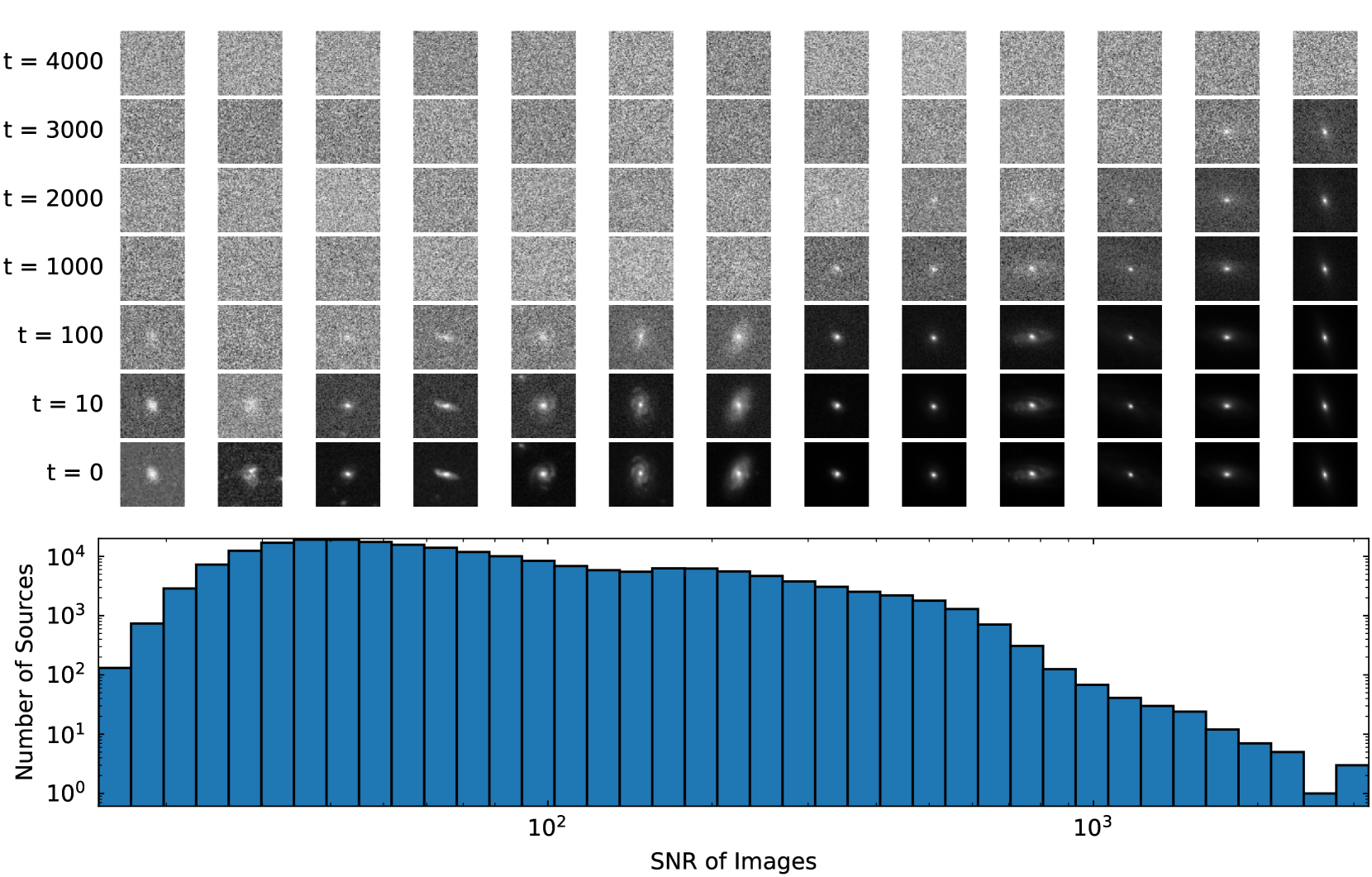

Astronomy & Astrophysics

Light emission from galaxies exhibits diverse brightness profiles, influenced by factors such as galaxy type, structural features, and interactions with other galaxies. Elliptical galaxies feature more uniform light distributions, while spiral and irregular galaxies have complex, varied light profiles due to their structural heterogeneity and star-forming activity. In addition, galaxies with an active galactic nucleus (AGN) feature intense, concentrated emission from gas accretion around supermassive black holes, superimposed on regular galactic light, while quasi-stellar objects (QSO) are the extreme case in which the AGN emission dominates the galaxy. The challenge of identifying AGN and QSO has been discussed many times in the literature, and often requires multi-wavelength observations. This paper introduces a novel approach to identify AGN and QSO from a single image. Diffusion models have recently been developed in the machine-learning literature to generate realistic-looking images of everyday objects. Utilising the spatial resolving power of the Euclid VIS images, we created a diffusion model trained on one million sources, without using any source pre-selection or labels. The model learns to reconstruct the light distributions of normal galaxies, since they dominate the population. We condition the prediction of the central light distribution by masking the central few pixels of each source and reconstructing the light according to the diffusion model. We then use this prediction to identify sources that deviate from the expected profile by examining the reconstruction error of the few central pixels regenerated in each source's core. Our approach, which uses VIS imaging alone, features high completeness compared to traditional methods of AGN and QSO selection, including optical, near-infrared, mid-infrared, and X-ray selection.

@misc{stevens2025EuclidInpaintingAGN,
  author = {{Stevens}, G. and {Fotopoulou}, S. and {Bremer}, M.~N. and {Matamoro Zatarain}, T. and {Jahnke}, K. and {Margalef-Bentabol}, B. and {Huertas-Company}, M. and {Smith}, M.~J. and {Walmsley}, M. and {Salvato}, M. and {Mezcua}, M. and {Paulino-Afonso}, A. and {Siudek}, M. and {Talia}, M. and {Ricci}, F. and {Roster}, W. and the {Euclid Collaboration}},
  title = "{Euclid Quick Data Release (Q1). Active galactic nuclei identification using diffusion-based inpainting of Euclid VIS images}",
  journal = {Astronomy \& Astrophysics},
  year = {2025},
  publisher = {EDP sciences},
  DOI = "10.1051/0004-6361/202554612",
}
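The mask-and-reconstruct scoring described in the abstract above can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the helper names, the mask radius, and in particular `ring_mean_inpaint`, which is a toy stand-in that fills the core with the mean of the surrounding ring and is not the diffusion model used in the paper. The sketch only shows why a compact central excess produces a larger reconstruction error than a smooth profile.

```python
import numpy as np

def radius_map(shape):
    """Distance of every pixel from the geometric centre of the image."""
    yy, xx = np.indices(shape)
    cy, cx = (shape[0] - 1) / 2.0, (shape[1] - 1) / 2.0
    return np.hypot(yy - cy, xx - cx)

def central_mask(shape, radius):
    """Boolean mask, True for pixels within `radius` of the centre."""
    return radius_map(shape) <= radius

def ring_mean_inpaint(masked, mask, ring_width=2.0):
    """Toy inpainter (NOT a diffusion model): fill the core with the ring mean."""
    r = radius_map(mask.shape)
    ring = ~mask & (r <= r[mask].max() + ring_width)
    out = masked.copy()
    out[mask] = masked[ring].mean()
    return out

def central_reconstruction_error(image, inpaint_fn, radius=2.0):
    """Hide the core, regenerate it, and return the mean squared error there."""
    mask = central_mask(image.shape, radius)
    masked = np.where(mask, 0.0, image)      # remove the central pixels
    reconstructed = inpaint_fn(masked, mask)  # predict them from the surroundings
    return float(np.mean((image[mask] - reconstructed[mask]) ** 2))

# Synthetic sources: a smooth extended profile, and the same profile
# with an additional compact central component.
r = radius_map((33, 33))
galaxy = np.exp(-r**2 / (2 * 6.0**2))
agn_like = galaxy + 5.0 * np.exp(-r**2 / (2 * 1.0**2))

err_galaxy = central_reconstruction_error(galaxy, ring_mean_inpaint)
err_agn = central_reconstruction_error(agn_like, ring_mean_inpaint)
print(f"smooth profile error: {err_galaxy:.4f}, compact core error: {err_agn:.4f}")
```

In practice the inpainting function would be a trained generative model and the threshold on the error would be calibrated against known AGN and QSO samples; neither is specified here.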

Euclid Quick Data Release (Q1) Exploring galaxy properties with a multi-modal foundation model

Astronomy & Astrophysics

Modern astronomical surveys, such as the Euclid mission, produce high-dimensional, multi-modal data sets that include imaging and spectroscopic information for millions of galaxies. These data serve as an ideal benchmark for large, pre-trained multi-modal models, which can leverage vast amounts of unlabelled data. In this work, we present the first exploration of Euclid data with AstroPT, an autoregressive multi-modal foundation model trained on approximately 300 000 optical and infrared Euclid images and spectral energy distributions (SEDs) from the first Euclid Quick Data Release. We compare self-supervised pre-training with baseline fully supervised training across several tasks: galaxy morphology classification; redshift estimation; similarity searches; and outlier detection. Our results show that: (a) AstroPT embeddings are highly informative, correlating with morphology and effectively isolating outliers; (b) including infrared data helps to isolate stars, but degrades the identification of edge-on galaxies, which are better captured by optical images; (c) simple fine-tuning of these embeddings for photometric redshift and stellar mass estimation outperforms a fully supervised approach, even when using only 1% of the training labels; and (d) incorporating SED data into AstroPT via a straightforward multi-modal token-chaining method improves photo-z predictions, and allows us to identify potentially more interesting anomalies (such as ringed or interacting galaxies) compared to a model pre-trained solely on imaging data.

@article{Siudek2025EuclidFoundation,
  author = {{Siudek}, M. and {Huertas-Company}, M. and {Smith}, M. and {Martinez-Solaeche}, G. and {Lanusse}, F. and {Ho}, S. and {Angeloudi}, E. and {Cunha}, P.~A.~C. and {Domínguez Sánchez}, H. and {Dunn}, M. and {Fu}, Y. and {Iglesias-Navarro}, P. and {Junais}, J. and {Knapen}, J.~H. and {Laloux}, B. and {Mezcua}, M. and {Roster}, W. and {Stevens}, G. and {Vega-Ferrero}, J. and the {Euclid Collaboration}},
  title = "{Euclid Quick Data Release (Q1) Exploring galaxy properties with a multi-modal foundation model}",
  journal = {Astronomy \& Astrophysics},
  year = {2025},
  publisher = {EDP sciences},
  DOI = "10.1051/0004-6361/202554611",
}
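The similarity searches mentioned in the abstract above operate on per-galaxy embedding vectors. As a rough illustration only, the sketch below ranks neighbours by cosine similarity; the function name `most_similar`, the choice of cosine similarity, and the random synthetic vectors are all assumptions made for this example and do not reproduce AstroPT outputs or the paper's actual search procedure.

```python
import numpy as np

def most_similar(embeddings, query_index, k=3):
    """Return the indices of the k rows most similar to the query row."""
    # Normalise rows so that a dot product equals cosine similarity.
    unit = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)
    scores = unit @ unit[query_index]
    scores[query_index] = -np.inf  # never return the query itself
    return np.argsort(scores)[::-1][:k]

# Synthetic stand-in for model embeddings: 100 sources, 16 dimensions.
rng = np.random.default_rng(0)
embeddings = rng.normal(size=(100, 16))
# Make source 7 a near-copy of source 3 so the search has a known answer.
embeddings[7] = embeddings[3] + 0.01 * rng.normal(size=16)

neighbours = most_similar(embeddings, query_index=3, k=3)
print("closest sources to source 3:", neighbours)
```

With real embeddings the same ranking could be used to retrieve galaxies resembling a chosen example, and sources with no close neighbours are natural outlier candidates.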

2024

Euclid preparation - XLIII. Measuring detailed galaxy morphologies for Euclid with machine learning

Astronomy & Astrophysics

The Euclid mission is expected to image millions of galaxies at high resolution, providing an extensive dataset with which to study galaxy evolution. Because galaxy morphology is both a fundamental parameter and one that is hard to determine for large samples, we investigate the application of deep learning in predicting the detailed morphologies of galaxies in Euclid using Zoobot, a convolutional neural network pretrained with 450 000 galaxies from the Galaxy Zoo project. We adapted Zoobot for use with emulated Euclid images generated based on Hubble Space Telescope COSMOS images and with labels provided by volunteers in the Galaxy Zoo: Hubble project. We experimented with different numbers of galaxies and various magnitude cuts during the training process. We demonstrate that the trained Zoobot model successfully measures detailed galaxy morphology in emulated Euclid images. It effectively predicts whether a galaxy has features and identifies and characterises various features, such as spiral arms, clumps, bars, discs, and central bulges. When compared to volunteer classifications, Zoobot achieves mean vote fraction deviations of less than 12% and an accuracy of above 91% for the confident volunteer classifications across most morphology types. However, the performance varies depending on the specific morphological class. For the global classes, such as disc or smooth galaxies, the mean deviations are less than 10%, with only 1000 training galaxies necessary to reach this performance. On the other hand, for more detailed structures and complex tasks, such as detecting and counting spiral arms or clumps, the deviations are slightly higher, namely around 12% with 60 000 galaxies used for training. In order to enhance the performance on complex morphologies, we anticipate that a larger pool of labelled galaxies is needed, which could be obtained using crowdsourcing. We estimate that, with our model, the detailed morphology of approximately 800 million galaxies of the Euclid Wide Survey could be reliably measured and that approximately 230 million of these galaxies would display features. Finally, our findings imply that the model can be effectively adapted to new morphological labels. We demonstrate this adaptability by applying Zoobot to peculiar galaxies. In summary, our trained Zoobot CNN can readily predict morphological catalogues for Euclid images.

@article{Aussel2024euclid,
  title = {Euclid preparation-XLIII. Measuring detailed galaxy morphologies for Euclid with machine learning},
  author = {{Aussel}, B. and {Kruk}, S. and {Walmsley}, M. and {Castellano}, M. and {Conselice}, C.J. and {Delli Veneri}, M. and {Dominguez Sanchez}, H. and {Duc}, P.-A. and {Knapen}, J.H. and {Kuchner}, U. and {La Marca}, A. and {Margalef-Bentabol}, B. and {Marleau}, F.R. and {Stevens}, G. and {Toba}, Y. and {Tortora}, C. and {Wang}, L. and the {Euclid Collaboration}},
  journal = {Astronomy \& Astrophysics},
  volume = {689},
  pages = {A274},
  year = {2024},
  publisher = {EDP sciences},
  DOI = "10.1051/0004-6361/202449609",
}
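The two headline figures in the abstract above, mean vote fraction deviation and accuracy on confident volunteer classifications, can be illustrated for a single binary question. The definitions below are assumptions for the sake of a sketch: the deviation is taken as the mean absolute difference between predicted and volunteer vote fractions, and "confident" is taken to mean a volunteer fraction of at least 0.8 or at most 0.2. The paper's exact definitions and thresholds are not stated in this listing, and the numbers are made up.

```python
import numpy as np

def mean_vote_fraction_deviation(predicted, volunteer):
    """Mean absolute difference between predicted and volunteer vote fractions."""
    predicted = np.asarray(predicted, dtype=float)
    volunteer = np.asarray(volunteer, dtype=float)
    return float(np.mean(np.abs(predicted - volunteer)))

def confident_accuracy(predicted, volunteer, threshold=0.8):
    """Accuracy restricted to galaxies where volunteers clearly agree.

    `threshold` is a hypothetical cut: a galaxy counts as confidently
    classified if its volunteer fraction is >= threshold or <= 1 - threshold.
    """
    predicted = np.asarray(predicted, dtype=float)
    volunteer = np.asarray(volunteer, dtype=float)
    confident = (volunteer >= threshold) | (volunteer <= 1.0 - threshold)
    if not confident.any():
        return float("nan")
    agree = (predicted[confident] >= 0.5) == (volunteer[confident] >= 0.5)
    return float(np.mean(agree))

# Illustrative vote fractions for one question, e.g. "does it have features?"
volunteer = [0.90, 0.10, 0.50, 0.85, 0.15]
predicted = [0.80, 0.20, 0.60, 0.40, 0.05]

deviation = mean_vote_fraction_deviation(predicted, volunteer)
accuracy = confident_accuracy(predicted, volunteer)
print(f"mean deviation: {deviation:.2f}, confident accuracy: {accuracy:.2f}")
```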